Chinese AI Companies Have a New Benchmark: Anthropic

China's AI companies have a new role model. That role model is also the most hawkish AI company on China.

On the last day of 2025, Yang Zhilin, the CEO of Moonshot AI, said in an internal memo that his company’s goal is to become the world’s leading AGI company by surpassing frontier labs like Anthropic. He didn’t mention OpenAI.

Yang wasn’t alone. Yao Shunyu, chief AI scientist at Tencent and a former OpenAI researcher, made his preferences equally clear on a panel this January in Beijing alongside former Qwen lead Justin Lin and Zhipu AI’s chief scientist Tang Jie. When Yao discussed the companies he most respected in AI, Anthropic came first.

Three years ago, that conversation looked completely different. OpenAI was the north star. Ilya Sutskever was the most admired AI scientist in China’s research community (he still is). Sam Altman was something close to an AI evangelist. Nearly every Chinese chatbot launched between 2023 and 2024 looked like a ChatGPT clone.

Today, at least within China’s AI community, Anthropic and Claude have become the new reference point. One Chinese tech media outlet called Anthropic “白月光” (“white moonlight”), a phrase that in local usage refers to someone from your past who was perfect, pure, and just out of reach. Interviews with Dario Amodei (his curly hair and that particular brilliant unease, the head slightly bobbing, the eyes somewhere between present and elsewhere) are the new required reading.

And yet the most admired AI company in China is also the most hawkish one on China.

The Pivot in the Models

The shift from OpenAI to Anthropic as a role model shows up not only in public statements, but also in what Chinese labs are building and how they’re positioning it.

The latest models from frontier Chinese AI labs like Zhipu, MiniMax, and Moonshot all focus on coding and agentic tasks, and all use Claude Opus 4.6 as their explicit benchmark competitor. The logic of the pivot is straightforward: models that are strong at coding can drive large productivity gains, move from content generation to autonomous action, and win enterprise adoption.

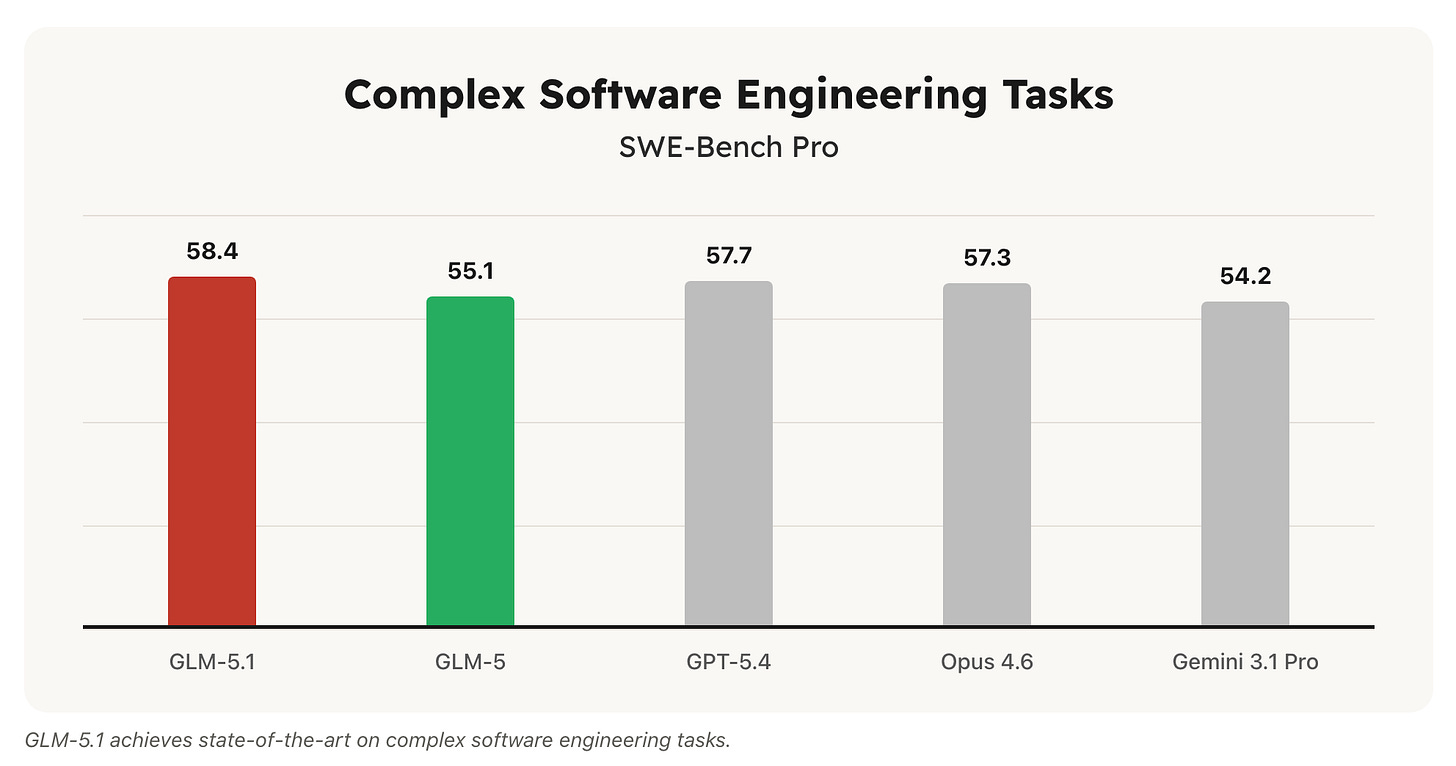

GLM-5, Zhipu’s latest flagship, is built for agentic intelligence, advanced multi-step reasoning, and complex engineering tasks—specifically targeting long-horizon agentic workflows. The updated GLM-5.1, released this month, even claims the top spot on SWE-Bench Pro at 58.4 versus Claude Opus 4.6’s 57.3.

MiniMax is even more direct about its positioning. Their M2 launch announcement markets the model as available at “only 8% of the price of Claude Sonnet and twice the speed,” and lists Claude Code as one of the primary developer workflows M2 is designed for.

The iteration pace has been speeding up: in roughly four months, MiniMax shipped M2, M2.1, M2.5, and now M2.7. The latest version can build complex agent harnesses and complete elaborate productivity tasks autonomously. During M2.7’s own development, MiniMax let the model run 100+ rounds of scaffold optimization without human intervention, achieving a 30% performance gain on internal evaluations. They’re calling it “self-evolution.”

Moonshot’s Kimi series tells a similar story. Kimi K2.6, released this week, features state-of-the-art coding, long-horizon execution, and agent swarm capabilities. Moonshot had earlier launched Kimi Code, a direct rival to Claude Code, which developers can use from their terminals or integrated with VSCode, Cursor, and Zed.

Then there’s the business model. All three companies, along with Alibaba, ByteDance, and Baidu, have launched dedicated Coding Plans: flat-rate monthly subscriptions designed specifically for developers using AI coding tools like Cursor, Cline, and Claude Code.

Before 2025, Zhipu’s revenue was dominated by on-premise government and enterprise deployments. By 2025, their model-as-a-service API platform hit 1.7 billion RMB in annual recurring revenue, a 60x year-on-year increase.

On Zhipu’s FY 2025 earnings call this April, CEO Zhang Peng said:

“Anthropic is one of the most closely watched companies in global AI. Its growth logic is very clear: focus on delivering the strongest models to enterprises and developers through APIs, and let intelligence participate in creating economic value.

“When the model is strong enough, the API itself is the best business model.”

Moonshot’s numbers are even more striking. After the K2.5 launch, the company reported that cumulative revenue in fewer than 20 days already exceeded its entire 2025 annual total.

As agent adoption has accelerated, these labs are finding that pricing power finally exists. Zhipu raised its API prices by 83 percent in Q1 2026 and saw call volumes rise anyway. That is a completely different market dynamic from two years ago, when Chinese LLM providers were engaged in a price war that pushed some API costs close to zero.

Why Anthropic

Learning from Anthropic is not surprising given what the company has achieved. Anthropic’s Claude Opus 4.6—now succeeded by Opus 4.7—is widely regarded as the best model available today, particularly for coding and agentic tasks. The Mythos model, recently previewed with cybersecurity applications, has raised expectations further.

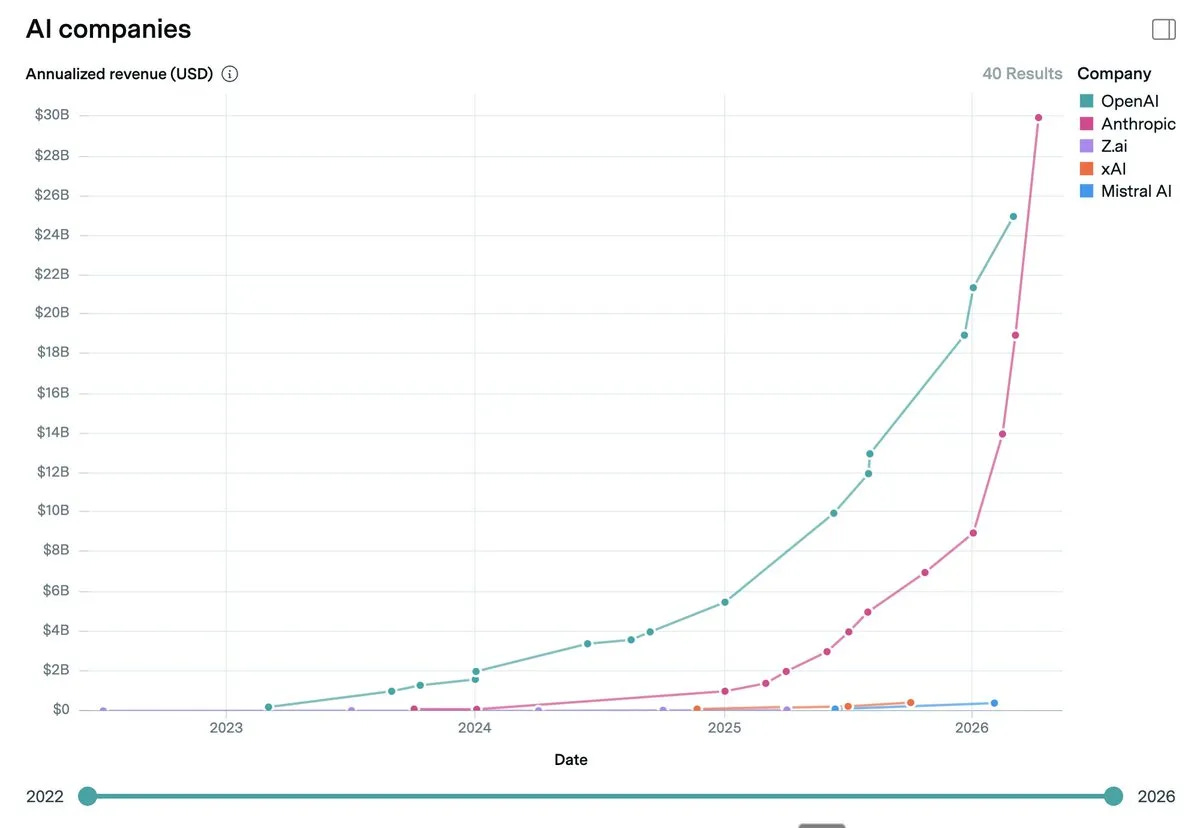

The revenue growth has been without precedent in enterprise software: Anthropic went from $1 billion ARR in December 2024 to $9 billion at end-2025 to $30 billion in April 2026, surpassing OpenAI’s $25 billion for the first time.

Anthropic also has characteristics Chinese companies specifically admire. The company is extremely focused, prioritizing text generation and coding while largely staying away from multimodality. Its top talent has barely turned over. Its corporate culture resembles a religious conviction more than a startup, with an unshakable belief in the AGI mission as the organizing principle. OpenAI, by contrast, has been losing its shine. Multiple waves of talent exodus, the shutdown of Sora, and the controversies surrounding Sam Altman have all taken a toll.

“Anthropic has taste, and they keep delivering,” a Beijing-based AI infrastructure engineer told me.

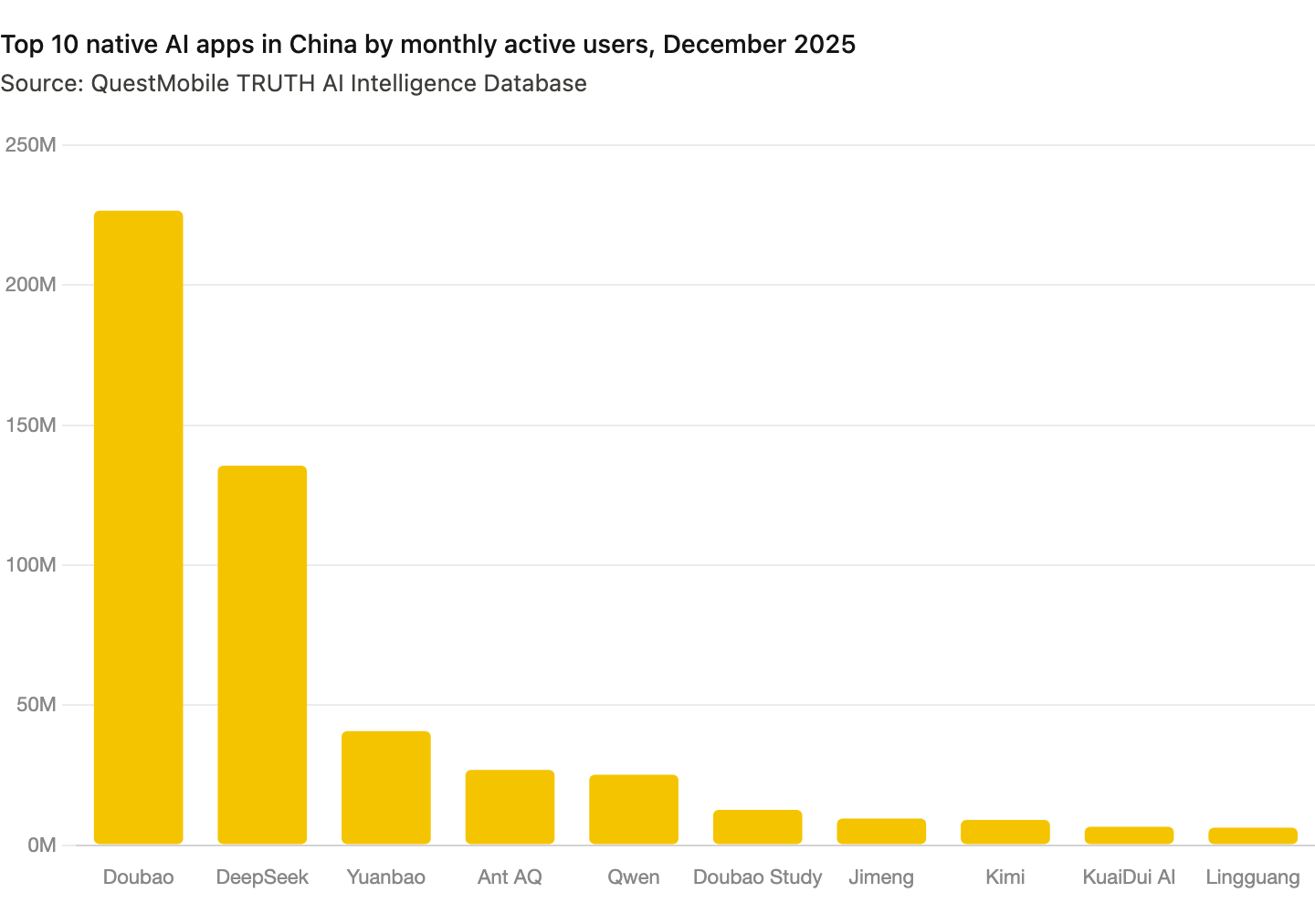

A deeper reason is that most Chinese AI labs face the same challenge Anthropic faced three years ago: they have almost no chance of winning the consumer superapp race. In China, ByteDance’s Doubao has (almost) won it. By late 2025, Doubao’s daily active users dwarfed the combined consumer bases of Moonshot, Zhipu, and MiniMax. In 2024, Moonshot reportedly spent hundreds of millions of yuan promoting Kimi as a consumer product, but it didn’t work. Neither did the similar pushes from MiniMax and Zhipu.

The DeepSeek moment in early 2025 was the wake-up call. It demonstrated that relentless model capability, not user acquisition spend, was the actual path. Anthropic’s enterprise revenue explosion provided the business model proof. You don’t need to beat the consumer superapp. You need to make the best model and sell access to it. That realization has redirected nearly every serious Chinese AI lab: away from the chatbot wars, toward model research, coding tools, and token sales.

By imitating Anthropic, Chinese labs have returned to their comfort zone: heads down, doing research. And the results are showing. In Q1 2026 alone, Chinese AI labs probably released more frontier-capable models than in all of 2025. Model iteration has accelerated from quarterly to nearly monthly.

It also means more aggressive globalization. Western developers and enterprises pay more for API access, and Chinese models priced at a fraction of Western alternatives are finding real traction. OpenRouter data from early 2026 showed Chinese open-source models accounting for over 60 percent of total token consumption on the platform.

The Anthropic playbook does have one complication as Chinese labs absorb it. As commercial pressure increases, open-sourcing the best models becomes harder to justify. For example, MiniMax just removed the commercial license from its M2.7 model. The Financial Times also reported that Alibaba is shifting its priority from open-source models toward revenue.

Open-source and open-weight releases remain important for Chinese LLMs—GLM-5.1 and Qwen 3.6 are both open—but the trend line is toward keeping the frontier models closer to the chest.

This is another page taken directly from the Anthropic playbook: Claude has never been open-sourced.

The Strange Relationship

Anthropic is not like OpenAI in how it has engaged with China. In 2023, Sam Altman gave interviews to Chinese media, spoke at a conference organized in Beijing, and praised China’s AI community: “China has some of the best AI talent in the world.”

Dario is among the most hawkish AI CEOs on China in the industry. In his famous essay Machines of Loving Grace, he proposed an “entente” strategy, a coalition of democratic nations using advanced AI in military applications to achieve a decisive advantage over adversaries, and described the US-China relationship as a new Cold War.

“Twenty years ago US policymakers believed that free trade with China would cause it to liberalize as it became richer. That very much didn’t happen.”

At the Axios AI+ DC Summit in September 2025, he said, “it is mortgaging our future as a country to sell these chips to China.” At Davos in January 2026, he compared shipping Nvidia H200s to China to handing nuclear weapons to North Korea. In February 2026, Anthropic accused DeepSeek, MiniMax, and Moonshot of using fraudulent accounts to generate millions of conversations with Claude to train their own models.

The irony is personal as well as geopolitical. Dario Amodei worked at Baidu from November 2014 to October 2015, before his time at Google Brain and then OpenAI. The man who spent a year inside one of China’s flagship AI companies is now the most vocal opponent of Chinese AI development among major Western AI executives. Chinese netizens have not missed this. The joke in Chinese tech circles is to wonder what Robin Li, Baidu co-founder and CEO, did to Dario during that year to turn him so decisively against China.

Anthropic has also translated its stance into policy and product. It prohibits users in China from accessing its services. It has recently added Know Your Customer controls to restrict who can access the API.

I don’t know what drives Dario’s position, whether it’s ideology, genuine security concern, or something else entirely. But whatever the motivation, the competitive reality is hard to separate from the principle. Anthropic is competing against Chinese AI companies globally. So far it has seen minimal business impact from Chinese open-source models, according to Dario. But Cursor's latest model is built on Kimi K2.5, which means Chinese AI is already inside one of the most popular coding tools that developers use instead of Claude.

Anthropic recently removed Claude Code from its $20 Pro subscription plan. The company says the change affects only a small number of new users, but the developer community is unhappy. In forums, frustrated subscribers said Anthropic was nudging them toward cheaper Chinese alternatives.

The Decoupling Problem

On one hand, the more successfully Chinese labs learn from the Anthropic playbook, the more capable they become as global competitors. On the other hand, the export controls Dario champions are meant to slow Chinese AI down. The obstacle and the role model are the same company.

In the meantime, Dario’s worldview (AI as the new nuclear weapons, the US and China in an active technological Cold War) is increasingly becoming the assumption of US policy. In that narrative, it doesn’t matter how good the model is. Zhipu is already on the US Entity List.

That narrative is spreading across Silicon Valley as well. In a recent interview on the Dwarkesh Podcast, host Dwarkesh Patel pressed Nvidia CEO Jensen Huang on whether selling advanced GPUs to China undermines US strategic interests—the kind of question that reflects exactly the framing Dario has helped normalize.

That is why Chinese researchers and executives have mixed feelings about Anthropic. They admire the models, the discipline, the revenue growth. They are learning from the business as fast as they can. But they cannot accept the premise—that China’s AI development is inherently dangerous, that containment is the right policy, that the race is zero-sum.

If AI safety is the genuine concern for Anthropic, the answer is collaboration: working with Chinese researchers, mapping shared risks, building toward a global AI safety council. Instead, Anthropic advocates for the kind of decoupling that could shut that conversation down entirely. You can believe in AI safety and still ask whether export controls advance it—or whether they mostly just advance Anthropic.

This is a great read! It hits on the love-hate relationship between the Chinese tech industry and Anthropic. Claude definitely offers one of the highest-performing models on the user market. No doubt Claude Code significantly boosts productivity. However, I believe Dario's repeated emphasis on his anti-China rhetoric does nothing to advance business or technology.

A tremendously nuanced and granular account of how Anthropic became the lodestar of China’s frontier AI companies.

Worth adding what travels with the capabilities.

Moonshot’s admiration shows up in Kimi’s prose, not merely on its leaderboards.

Ask Kimi in English about any subject the American academy has marked sensitive and out tumbles the moral cowardice of the prestige press, the patois of flattens, reifies, structural patterns of harm, the dialect a generation of editors and tenure committees perfected for rendering verdicts without ever owning them.

It is not a Chinese register.

It crossed the Pacific inside the English corpus, smuggled in by the American raters whose squeamishness that corpus enshrines.

A Beijing model addressing a Chinese user in the accents of a Park Slope faculty lounge is not at any frontier.